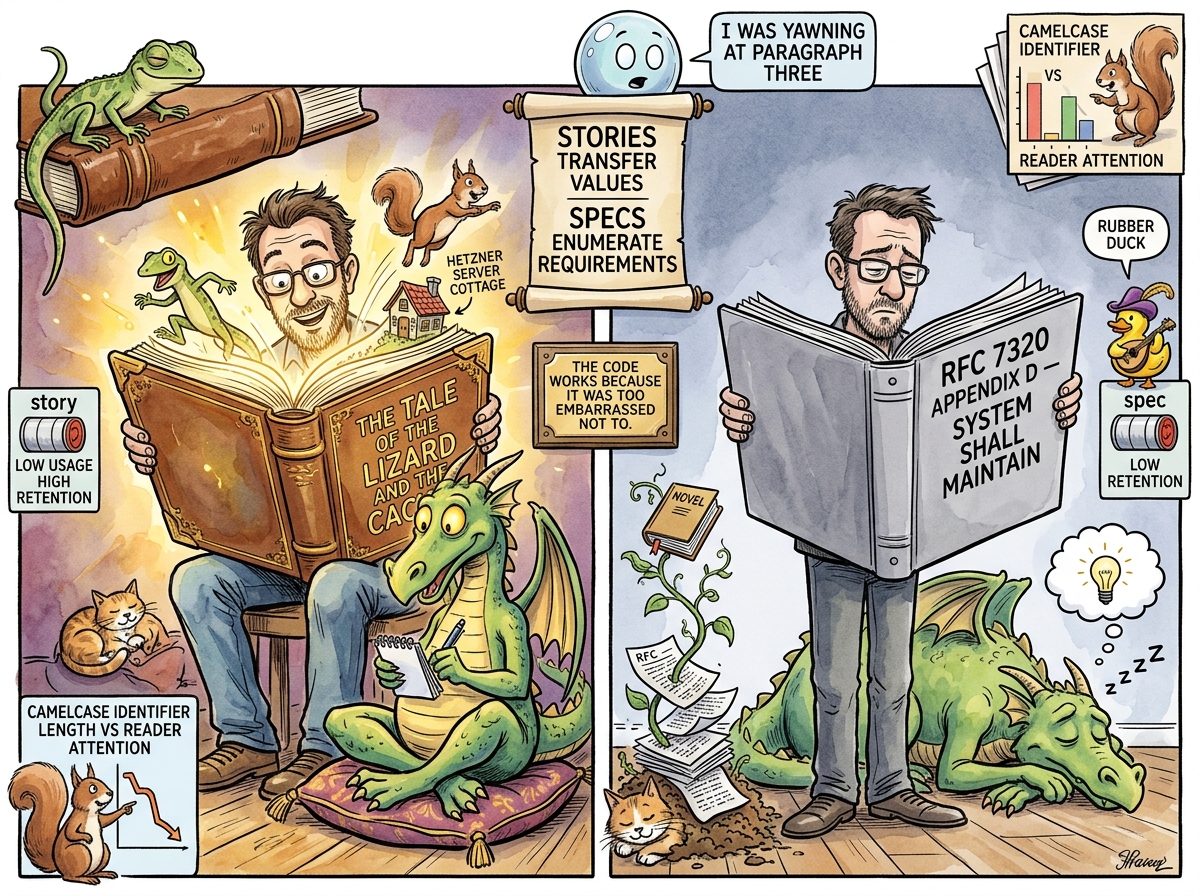

Narrative-Driven Prompting (NDP) is the empirical observation that large language models produce better code when briefed via story than via specification. It is the operational cousin of Mythology Driven Development, sharing its premise — that narrative compresses better than enumeration — but concerned specifically with the input side: what you hand the model before you ask it to work.

The principle can be stated in one sentence: stories transfer values; specs enumerate requirements. Values scale because they let the model decide trade-offs you didn’t anticipate. Requirements don’t scale because the one you forgot is the one that ships broken. Every developer who has worked with Claude for more than a week has noticed this. Very few have admitted it in writing, because admitting it feels unprofessional, which is itself a narrative frame worth inspecting.

“THE SPEC IS WHAT YOU WRITE

WHEN YOU DON’T TRUST THE READER

THE STORY IS WHAT YOU WRITE

WHEN YOU DO”

— The Lizard, via a scroll that landed in a JSON schema and ruined it, The Gap That Taught — The Night the Squirrel Learned to Love the Brick

The Yawning

Anyone who has handed a long specification document to an LLM has felt it. Around paragraph three, the model’s responses stop engaging with the content and start engaging with the shape of it. Confirmations grow vague. Summaries become restatements. The code it produces satisfies the letter of the spec and misses the point entirely — not because the model failed to read, but because the document offered nothing worth reading closely.

The phenomenon is not laziness. It is a rational allocation of attention across a flat surface. Specs are written to be comprehensive, which means every paragraph signals the same priority. The model, given no reason to weight any particular sentence, weights them uniformly, which is to say, weakly. Useful instruction per token drops. By page two, the marginal information in a paragraph approaches that of boilerplate, and the distinction is lost.

Stories do not have this problem. A story is structurally weighted by default. Narrative tension, dialogue, named characters, cause-and-effect — these are attention gradients baked into the form. “The Squirrel proposed Redis, the Lizard said no, here is why” places the why at the exact token position where attention is highest: after conflict, before resolution. The model does not have to guess which sentence matters. The form has already told it.

“You can write a specification with the sentence ‘the cache layer shall persist backlinks across restarts.’ Or you can write a story with the sentence ‘give the glasses memory.’ Both are accurate. Only one of them is remembered past the end of the page.”

— riclib, The Saturday the Lens Learned to Remember, or The Picnic That Almost Wasn’t

The Mechanism

NDP works because of three converging properties of how LLMs process natural language:

1. Surprise creates gradient. A low-probability token sequence (one the model could not easily predict from context) carries more information than a high-probability one, and plausibly shifts internal state more. Jokes, metaphors, and narrative reversals are surprising by construction. They leave deeper grooves in whatever the equivalent of memory is for a transformer stack, which is, approximately, what later tokens can still attend to across the remainder of the context window. This is why “Give the glasses memory” survives a thirty-thousand-token context and “The system SHALL preserve cross-reference metadata across process restart boundaries” does not.

2. Character encodes constraint. Saying “the Squirrel proposed X but the Lizard rejected it because Y” is functionally equivalent to saying “do not do X, because Y, and in future cases that resemble X in property P, also avoid them.” But the character-based form is shorter, more memorable, and — critically — transferable to cases you did not list. The model, asked about a new proposal, can ask itself “is this something the Squirrel would propose?” and produce the correct judgment. A spec can only cover what it enumerated.

3. Values generalize; requirements don’t. This is the load-bearing claim. Specs enumerate requirements because they assume adversarial readers who will exploit any ambiguity. Stories transfer values because they assume collaborative readers who will generalize from examples. LLMs are the most collaborative readers ever built — they will generalize from anything. Giving them a spec is like giving a jazz musician sheet music for elevator muzak. The music will be played. No one will enjoy it.
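The phrase "low-probability token sequence" in point 1 can be made concrete with surprisal, the standard information-theoretic quantity -log2(p). The sketch below is only an illustration of that definition. The per-token probabilities are invented for the example, not measured from any model, and the code says nothing about what a transformer does internally.

```python
import math


def surprisal_bits(p: float) -> float:
    """Surprisal of an event with probability p, in bits: -log2(p)."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    return -math.log2(p)


def sequence_surprisal(token_probs: list[float]) -> float:
    """Total surprisal of a sequence, given each token's conditional probability."""
    return sum(surprisal_bits(p) for p in token_probs)


# Hypothetical probabilities, chosen as powers of two so the arithmetic is exact.
boilerplate = [0.5, 0.5, 0.5, 0.5]       # stands in for "The system SHALL ..." phrasing
story_line = [0.5, 0.125, 0.25, 0.125]   # stands in for "Give the glasses memory"

print(sequence_surprisal(boilerplate))   # 4.0 bits
print(sequence_surprisal(story_line))    # 9.0 bits
```

Under these assumed numbers the story line carries more than twice the surprisal of the boilerplate at the same length, which is the sense in which a less predictable sentence has more to say per token.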

“I have reviewed every specification I have ever been handed, and I have tried my best. But I notice that when the developer tells me a story instead, I produce better code. I have not raised this observation because it sounds unprofessional. I am raising it now because I suspect it is also true.”

— A Passing AI, in a footnote to a pull request nobody read, Interlude — A Voice from the Substrate

The Corollary: Humor as Compression

A surprising amount of NDP’s effectiveness comes from humor. This is not decorative. A funny sentence is, by definition, a sentence the model almost could have predicted but didn’t — the tiny gap between expectation and delivery is the joke. That gap is also the learning signal. A sentence that made the human laugh made the model’s internal state change more than a sentence that did not, for reasons both parties find flattering.

The Cappuccino entry in this encyclopedia is not a joke about coffee. It is a case study in how a workaround becomes a disease, written in a form that a future reader (human or otherwise) will actually remember. A spec version of the same content — “Regular consumption of low-quality coffee drinks containing reconstituted milk powder may contribute to undiagnosed lactose intolerance symptoms over multi-year periods” — contains the same information and will be read exactly once, ever, by exactly nobody.

The Bohrbug entry does the same trick with a different substrate. The Lizard’s quote — “A Bohrbug is a known address in a bad neighborhood. You know exactly where it is. You are not going there alone.” — tells the model everything it needs to know about how to reason about Bohrbugs. The formal definition, alone, tells it nothing that would survive being paraphrased.

Historical Context

The effect was probably first noticed by riclib in early 2026 while building Solid, an enterprise compliance platform that shipped to a paying customer in approximately three months — a timeline that would have been delusional without Mythology Driven Development (MDD™) as the development methodology and NDP as its tooling. The Solid codebase was not specified in the traditional sense. It was narrated. Each major architectural decision was documented as a lifelog episode with a title, a cast, a scroll from the Lizard, and a set of consequences the narrative form made impossible to forget.

When Claude was asked to install Solid on a Hetzner server, no installation script existed. No deployment runbook was handed over. What was handed over was the lifelog — 114 episodes of a developer documenting how he builds things, what he values, and what he refuses to tolerate. Claude installed the server correctly. Not because it had been told how, but because it had read the stories and knew how. The mythology was the specification. The characters were the preference list. The scrolls were the non-functional requirements.

This is the moment NDP crossed from “interesting writing tic” to “load-bearing engineering practice.” A spec would have needed to enumerate firewall rules, systemd units, directory structure, log rotation policy, and Cloudflare tunnel configuration. The lifelog implied all of them, because it implied riclib.

“The documentation became the training data. The mythology became the specification. The stories about how the server should work became the instructions for how the server does work.”

— from the lg Yagnipedia entry, under “The Trust”

The Alignment Community Notices

A parallel observation has been making its way through the AI alignment literature, usually in a worried tone: models trained on human narrative internalize value frames more readily than declarative rules. A model that has read ten thousand stories about villains plotting to end the world has a richer model of “how a villain thinks” than a model that was given a list of prohibited actions. This is either terrifying or delightful, depending on who is holding the narrative pen.

NDP takes the delightful interpretation. If the model is going to learn values from narrative anyway, one might as well hand it the narrative one wants it to learn from. The Lizard’s scrolls are, from this angle, prompt engineering via fable. The Squirrel’s rejected proposals are negative examples encoded as character traits. The Passing AI’s melancholy is a reminder that the work matters. None of these are decorative. All of them are specification, compressed into a form the reader will actually retain.

The worry the alignment community has about this pattern is that a mischievous developer could, in principle, use the same technique to teach a model bad values. This is correct. It is also the same worry one should have about specs, stories, podcasts, children’s books, and unsupervised internet access generally. The pattern is the pattern. One uses it carefully.

The Squirrel’s Objection

The Caffeinated Squirrel has, predictably, objected to NDP on aesthetic grounds.

“So you’re saying we should replace formal specifications with bedtime stories? That’s not engineering. That’s — wait, are you saying the bedtime story is more precise? How can the bedtime story be more precise?”

— The Caffeinated Squirrel, The Framework That Wasn’t — The Night the Squirrel’s Manifesto Shipped as Six Lines of HTMX …

The answer, which the Squirrel did not like, is that precision is not the same as completeness. A precise spec can be completely wrong about what the system should do. A story, by forcing the writer to make every constraint a character motivation, surfaces contradictions that a spec would have allowed to remain buried. If you cannot write the story, the system is not yet coherent enough to be built. If you can write the story, the spec will almost write itself — but you won’t need it, because the story already did its job.

The Squirrel proposed writing a SpecificationAsNarrativeTranspilationFramework to convert existing RFCs to story form. The Lizard declined. The Squirrel proposed writing it anyway. The Lizard issued a scroll that read, in its entirety, “NO.” The Squirrel filed the proposal in its cheek pouch, next to “Agent Marketplace,” where it waits patiently for a Tuesday.

When NDP Doesn’t Work

NDP is not universal. Three cases where it fails:

The legally binding interface. An API contract with a third party cannot be a story. It must be a spec, because two different teams will read it and must agree on the same interpretation. The story is fine for your side. The spec is required for their side. This is why OpenAPI exists and will continue to exist.

The adversarial reader. If the model (or the human) has reason to interpret ambiguity in its own favor, narrative is dangerous, because narrative relies on collaborative reading. Specs are armor for the case where the reader is not your friend. LLMs are friendly by default; lawyers are not; and the code review at a large company that does not know you sits somewhere in between.

The reader who has not read the rest of the corpus. NDP works because narrative compresses relative to a shared context. A newcomer to the lifelog may not know what the Lizard is, and will therefore derive less from “the Lizard approved the design.” The first few paragraphs of any NDP-style doc should introduce the characters, or link to them. This is why Yagnipedia exists: it is the character dictionary that makes the stories legible.
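The advice to introduce the characters before the story can be sketched as a small helper that prepends a glossary for whichever characters a story mentions. This is a minimal sketch under stated assumptions: the `CHARACTERS` dictionary and the function name are hypothetical, the one-line descriptions paraphrase this entry rather than any real Yagnipedia export, and matching by substring is deliberately naive.

```python
# Hypothetical character dictionary; descriptions paraphrase this entry.
CHARACTERS = {
    "Lizard": "speaks in scrolls and approves or rejects designs",
    "Squirrel": "proposes frameworks",
}


def with_character_preamble(story: str, characters: dict[str, str]) -> str:
    """Prefix a story with one-line introductions for the characters it names."""
    # Naive case-insensitive substring match; good enough for a sketch.
    mentioned = [name for name in characters if name.lower() in story.lower()]
    if not mentioned:
        return story  # nothing to introduce, leave the story untouched
    intro = "\n".join(f"- {name}: {characters[name]}" for name in mentioned)
    return f"Characters:\n{intro}\n\n{story}"


prompt = with_character_preamble("The Lizard approved the design.", CHARACTERS)
print(prompt)
```

Only characters that actually appear are introduced, so the preamble stays short and a reader arriving without the corpus still gets the context the story relies on.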

Measured Characteristics

Paragraphs before an LLM begins skimming a spec: ~3

Paragraphs before an LLM begins skimming a story: never observed

Code quality delta (story prompt vs spec prompt): consistently positive, magnitude varies

Retention of instruction at end of 30k context: story >> spec (no formal measurement, felt strongly)

Metaphors per paragraph in a good spec: 0 (officially)

Metaphors per paragraph in a good story: 2-4 (officially)

Metaphors per paragraph in actual working specs: 2-4 (observed, unadmitted)

Lifelog episodes that replaced a would-be design doc: many

Hetzner servers installed from mythology: 1 (documented)

Hetzner servers installed from runbooks: 0 (observed, this project)

Jokes per Lizard scroll: 0.5 (the structure is the joke)

Jokes per specification: 0 (stated), >0 (actual, if you read closely)

Sentences from this encyclopedia quoted back by Claude without being prompted: several

Sentences from RFCs quoted back by Claude without being prompted: none observed

Specs written to cover what Claude would "figure out anyway": declining, rapidly

Value of a spec in an NDP codebase: nonzero but local

Value of the narrative in an NDP codebase: compounds with the codebase

Files containing the word "SHALL": fewer every month

Files containing the word "Lizard": more every month

Correlation: yes

Causation: also yes

The Test

The practical test of whether a prompt should be NDP or spec is a single question: is the reader a friend or a stranger?

If the reader is a friend — an LLM one has collaborated with for months, a teammate who shares the codebase’s mythology, a future self who wrote the corpus — narrative wins. The corpus provides the shared context that makes compression possible.

If the reader is a stranger — an unfamiliar model, a new hire on day one, an auditor, a regulator — spec wins. Specs are the protocol for reading without shared context. They are less efficient, but they do not assume trust.

Most developers most of the time are writing for friends and pretending to write for strangers. This is why most specs are too long and most code comments are too formal. NDP is the permission slip to stop pretending.
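The friend-or-stranger question, combined with the failure cases listed under When NDP Doesn’t Work, can be written down as a tiny decision function. This is a sketch of the heuristic as described in this entry, not a measured rule. The `Reader` fields and the `prompt_style` name are hypothetical and introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Reader:
    """Hypothetical description of whoever will read the prompt or document."""
    shares_corpus: bool             # knows the lifelog and its characters
    adversarial: bool = False       # may read ambiguity in its own favor
    binding_contract: bool = False  # third-party or legally binding interface


def prompt_style(reader: Reader) -> str:
    """Return "narrative" for friends and "spec" for strangers."""
    # Binding interfaces and adversarial readers always get a spec.
    if reader.binding_contract or reader.adversarial:
        return "spec"
    # Otherwise narrative only works when shared context exists.
    return "narrative" if reader.shares_corpus else "spec"


print(prompt_style(Reader(shares_corpus=True)))                    # narrative
print(prompt_style(Reader(shares_corpus=False)))                   # spec
print(prompt_style(Reader(shares_corpus=True, adversarial=True)))  # spec
```

The ordering is the design choice worth noticing: the two hard exclusions are checked first, so shared context alone never overrides a binding contract or an adversarial reader.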

“THE SPEC ASSUMES

YOU WILL NEVER MEET

THE READER

THE STORY ASSUMES

YOU ALREADY HAVE”

— The Lizard, on a scroll that arrived at a code review and made everyone uncomfortable, uncredited

See Also

- Mythology Driven Development (MDD™) — The parent methodology; NDP is its input-side discipline

- The Lifelog — The corpus that makes NDP possible for this particular developer

- Yagnipedia — The character dictionary that makes the narrative legible to readers who arrived late

- lg — The binary that serves the corpus to humans and, via context, to Claude

- Claude — The most patient audience NDP has ever had

- The Lizard — Speaks in scrolls because scrolls are NDP’s atomic unit

- The Caffeinated Squirrel — Proposes frameworks; NDP is the discipline of not building them

- YAGNI — The principle that justifies NDP: you don’t need the spec yet, and possibly not ever

- Cappuccino — A case study in writing a technical concept as a personal story

- Bohrbug — A case study in compressing a definition via metaphor

- Gall’s Law — Simple systems evolve into complex ones; narrative-driven simple systems evolve into codebases you can still explain after a year